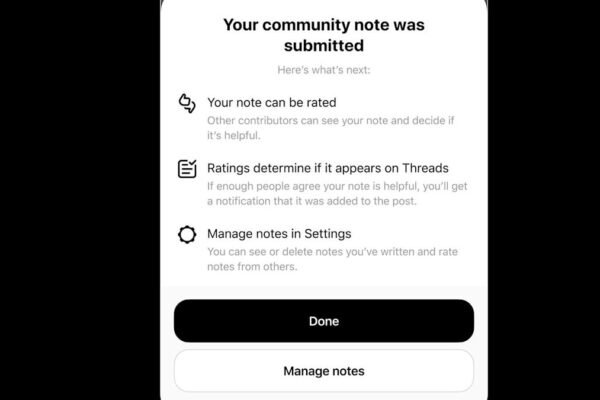

Oversight Board tells Meta expanding Community Notes outside of US poses ‘significant’ risks

Meta didn’t consult its Oversight Board last year when it announced sweeping changes to its content moderation policies and a rollback of third-party fact-checking in the United States in favor of Community Notes. But the company did ask the board for advice on how to expand the crowd-sourced fact checks to other countries. Now the…