The AI boom is coming for the Switch 2

Data centers are eating up computing resources and pushing chipmakers toward AI-grade memory, tightening supply for Nintendo and other hardware makers

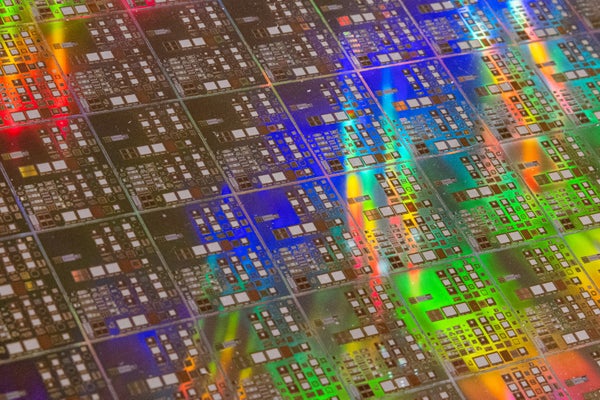

Silicon wafers like the one pictured here are the raw canvas for both consumer memory and high-end AI processors. Supply is running low.

Annabelle Chih/Stringer/Getty Images

The Nintendo Switch 2 and an artificial intelligence data center don’t look remotely alike. Yet they depend on the same crucial component: dynamic random-access memory, or DRAM. This is the fast working memory that computers use to run today’s applications. Cloud computing giants such as Microsoft and Google are buying up memory hardware at data-center scale to build out AI clusters, tightening the supply for everyone else, Nintendo included.

On an earnings call on Tuesday, Nintendo president Shuntaro Furukawa said higher memory prices had not significantly affected the company’s results for the current financial year, but he warned that if prices stay elevated the costs could start cutting into profitability. The company’s shares promptly slid nearly 11 percent in Tokyo.

DRAM stores data bits as tiny electrical charges. Those charges leak away, and the system refreshes them constantly. Think of it as a whiteboard where the ink fades over time and the system keeps tracing over the same words so they remain readable. Video games lean on that capability to keep gameplay smooth and responsive. A console like the Switch 2, for example, uses DRAM as a staging ground for whatever data the processor and graphics chip need next, from lighting and character positions to animation timing and collision calculations.
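
The whiteboard analogy can be made concrete with a toy simulation. The sketch below is purely illustrative: the leak rate, read threshold and refresh interval are hypothetical numbers chosen to make the effect visible, not figures from any real memory chip. Each cell loses a fraction of its charge every time step. Without refresh, the stored 1s eventually fade below the point where they can be read. With periodic refresh, each cell is read and rewritten at full strength before that happens.

```python
# Toy model of DRAM refresh. All constants are hypothetical and chosen for
# illustration only; real cells, leak rates and refresh timings differ.

FULL_CHARGE = 1.0     # normalized charge stored for a "1" bit
READ_THRESHOLD = 0.5  # charge above this level reads back as 1
LEAK_PER_TICK = 0.9   # fraction of charge remaining after each time step


def read_bits(charges):
    """Sense each cell: enough charge means 1, otherwise 0."""
    return [1 if c > READ_THRESHOLD else 0 for c in charges]


def simulate(bits, ticks, refresh_every=None):
    """Store bits, let them leak for `ticks` steps, optionally refreshing."""
    charges = [FULL_CHARGE if b else 0.0 for b in bits]
    for t in range(1, ticks + 1):
        # Every cell leaks a little charge each step.
        charges = [c * LEAK_PER_TICK for c in charges]
        if refresh_every and t % refresh_every == 0:
            # Refresh: read each cell, then rewrite it at full strength.
            charges = [FULL_CHARGE if b else 0.0 for b in read_bits(charges)]
    return read_bits(charges)


stored = [1, 0, 1, 1]
print(simulate(stored, ticks=10))                   # [0, 0, 0, 0]: the data faded
print(simulate(stored, ticks=10, refresh_every=4))  # [1, 0, 1, 1]: refresh kept it
```

With these made-up numbers, a full cell drops below the read threshold after seven steps, so refreshing every four steps is frequent enough to preserve the data while ten steps without refresh is not.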

According to a spec breakdown posted by Digital Foundry in May, the Switch 2 uses 12 gigabytes of LPDDR5X, a mobile-friendly DRAM standard designed to move large amounts of data quickly without rapidly draining the handheld’s battery.

AI data centers also need DRAM, but in far larger quantities. Their servers pack standard DRAM for the central processing units that perform tasks such as scheduling work and moving data around the system. The compute-heavy chips that run AI (often graphics processing units such as Nvidia’s) require a specialized form of DRAM called high-bandwidth memory, or HBM.

HBM is DRAM engineered for the data firehose that AI accelerator chips demand. To make HBM, manufacturers stack DRAM chips vertically and connect them with tiny channels etched through silicon that carry signals between layers. HBM sits next to the accelerator and feeds it data fast enough to keep the chip computing.

So why not simply manufacture more DRAM? The problem is that manufacturers cannot spin up memory capacity overnight. HBM and consumer DRAM start from the same silicon wafers and share much of the same supply chain. HBM also requires specialized packaging, but because it commands higher prices, manufacturers have prioritized these AI-focused products, leaving less capacity for conventional memory.

Industry leaders have warned that supply chain constraints could persist into next year, and if they do, they will shape the performance that consumer hardware like the Switch 2 can comfortably deliver. Chipmakers such as Samsung and SK Hynix are trying to close the DRAM gap, but such projects take years. Until then, Nintendo and other device makers can absorb the costs, raise prices, trim bundles or make quiet spec trade-offs.