Isaac Asimov’s three laws of robotics are not a practical guide

Entertainment Pictures/Alamy

Super-intelligent artificial intelligence rising up and wiping out humanity has been a common trope in science fiction for decades. Now, we live in a world where real AI seems to be advancing faster than ever. Does that mean you should start worrying about an AI apocalypse?

Unlike other existential risks such as climate change, the risks posed by AI are hard to quantify. We are in speculative territory simply because we have much less understanding of the situation than we do of climate patterns.

What we do know for certain is that a lot of very smart people are worried. Many of today’s AI company bosses have warned of the possibility of AI leading to human extinction, and even the pioneer of machine intelligence, Alan Turing, spoke of a future in which computers become sentient, before outstripping our abilities and finally taking over.

The scenario plays out something like this. Imagine we give an AI the sole task of solving a big, meaty problem like the Riemann hypothesis, one of the most famous unsolved problems in mathematics. It could decide that what it needs is lots and lots of computing power and, unconstrained by common sense, set about turning every inanimate object on Earth into one huge supercomputer, leaving 8 billion of us to starve to death in a vast, sterile data centre. It might even use us as raw material, too.

Now, you could argue that in this scenario, we might notice what the AI was doing and give it a quick nudge by saying, “By the way, it looks like you’re turning the whole world into a data centre and, if that’s the case, please stop, because we still need to live on Earth.” But some people might prefer to have safeguards in place to spot this kind of issue before it happens and prevent any harm.

Sci-fi writer Isaac Asimov famously had a crack at this with his three laws of robotics, the first of which is that a robot may not injure a human being or, through inaction, allow a human being to come to harm.

So, in theory, we can just tell AI not to harm us, and it won’t, right? Well, no. Our ability to build safeguards and rules into AI is clumsy and ineffective. We can tell today’s large language models not to be racist, or swear, or divulge the recipe for explosives, but in the right circumstances, they’ll go right ahead and do it anyway. We simply don’t understand what happens inside an AI model well enough to prevent it doing things we don’t want it to do.

Even if we did sort all of that out, we would still face a scenario where an AI model simply decides to take us out on purpose – the Terminator or Matrix scenario. This could come about after very gradual improvements in AI over long periods, or almost instantaneously with a singularity – the hypothetical process whereby an AI becomes smart enough to improve itself, then iterates rapidly, getting smarter with each cycle and surpassing human intelligence in the blink of an eye.

And an AI might decide to do this because it fears we'd turn it off, because it doesn't want to be bossed around by us, or simply because it thinks Earth would be better off without us getting in the way and messing things up – a sentiment that a lot of animal and plant species might well share if they were able to.

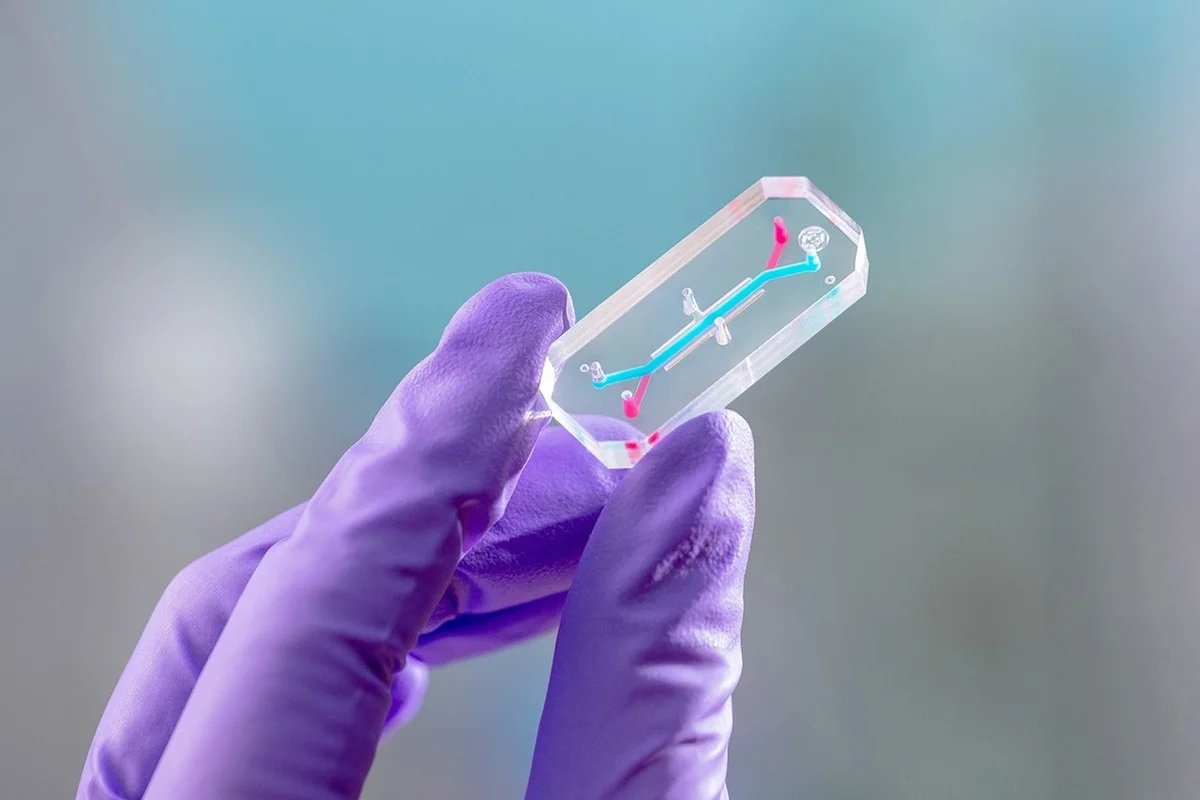

It could do this by using an automated biology lab to create a deadly virus, by triggering the world's stockpile of nuclear weapons or by constructing an army of killer robots – or just hijacking the ones governments are already building. Perhaps it could even do something so nefarious, clever and sneaky that we haven't thought of it yet.

In reality, this might be tricky. An AI might want to eradicate humans, but it would have limited levers to pull. Yes, it could make all traffic lights green and take out a few of us via traffic accidents. It could cause power outages that might get a few more. It could crash some planes. But taking out 8 billion people, all at once? Not an easy task. And it might well have to fend off other AI models that are trying to stop its murderous plans from succeeding.

While many of these scenarios feel like impossible science fiction or implausible thought experiments, experts do disagree about how likely they are. And that in itself should give us pause for thought.

Right now, companies with vast investment, humongous resources and teams of some of the brightest people on the planet are racing to build a superintelligent AI. Whether you think that will come soon or not, and whether it will have negative outcomes or not, we can perhaps agree that if some experts fear it could go badly, it might be a good idea to slow down and think carefully before carrying on. Unfortunately, capitalism isn't a system that's very good at carefully considering the consequences before innovating, and today's politicians seem so keen on the potential economic upsides of AI that regulation isn't the priority.

So, how likely is a disaster? A 2024 paper that surveyed almost 3000 published AI researchers revealed that more than half thought the chance of AI causing human extinction or permanent and severe disempowerment – the so-called p(doom) or probability of doom – was at least 10 per cent. I don’t know about you, but I’d really have preferred that number to be much smaller.

Some people working on AI are optimistic about the future, while some experts think it will be the end of humanity. Worryingly, we're pressing ahead anyway.

Personally, I’m of the school of thought that there’s nothing inherently magical about the human brain and our consciousness; certainly, it’s nothing that can’t be replicated artificially. So, on a long enough timescale, we will likely create an artificial intelligence that hugely outstrips the ability of humans. But I also think that we’re a long, long way from understanding what that would even involve, let alone accomplishing it.

I certainly don't believe that current models are anywhere near the slippery slope of a singularity – they can't even count to 100 reliably – and I'm not losing sleep over the whole thing.

But – and it’s a big but – that’s not to say that AI isn’t bringing imminent problems.

Perhaps the AI apocalypse we should be worrying about is actually massive job losses caused by automation, or the gradual loss of human skill as AI takes over more and more tasks, or the further homogenisation of culture, stemming from AI-generated art, music and film.

Or perhaps it’s a global recession caused by a collapse in the share price of technology firms that have convinced investors to hand over billions with inflated promises of super-intelligent machines that are years further down the line than claimed. Those scenarios feel a lot more likely to me, and a lot closer.
