In late March some of the heaviest users of Anthropic’s Claude large language models began posting screenshots of a strange new scarcity: they were reaching five-hour usage limits in 20 minutes. Complaints spread across Reddit, GitHub and X. Anthropic told subscribers that their sessions would burn through usage limits faster during peak hours. The company also blocked some third-party tools, including OpenClaw, from drawing on its flat-rate subscription limits. Several weeks earlier Boris Cherny, who leads Claude Code, said that a default setting for how the model thinks had been lowered.

Users immediately questioned why a paid AI tool was suddenly giving them less. Had the AI boom begun to outrun the machinery needed to sustain it?

The pressure is not limited to Anthropic. OpenAI has begun shuttering Sora, its video-generation platform, as the number of developers using its coding assistant Codex has surged to four million per week. Investors and developers are now talking about a “compute crunch,” the possibility that demand for AI is growing faster than companies can build data centers and power them.

The stakes are larger than causing frustration for developers. If AI becomes the everyday interface for coding, science, learning, medicine, customer service, defense planning and office work, then access to compute becomes access to economic speed. And limits are starting to show up in the products people use.

The numbers are already steep. In a July 2025 white paper, Anthropic projected that the U.S. AI sector will need at least 50 gigawatts of electric capacity by 2028 to maintain global AI leadership—roughly the output of 50 large nuclear reactors. The International Energy Agency projects that global data-center electricity use is on track to double by 2030.

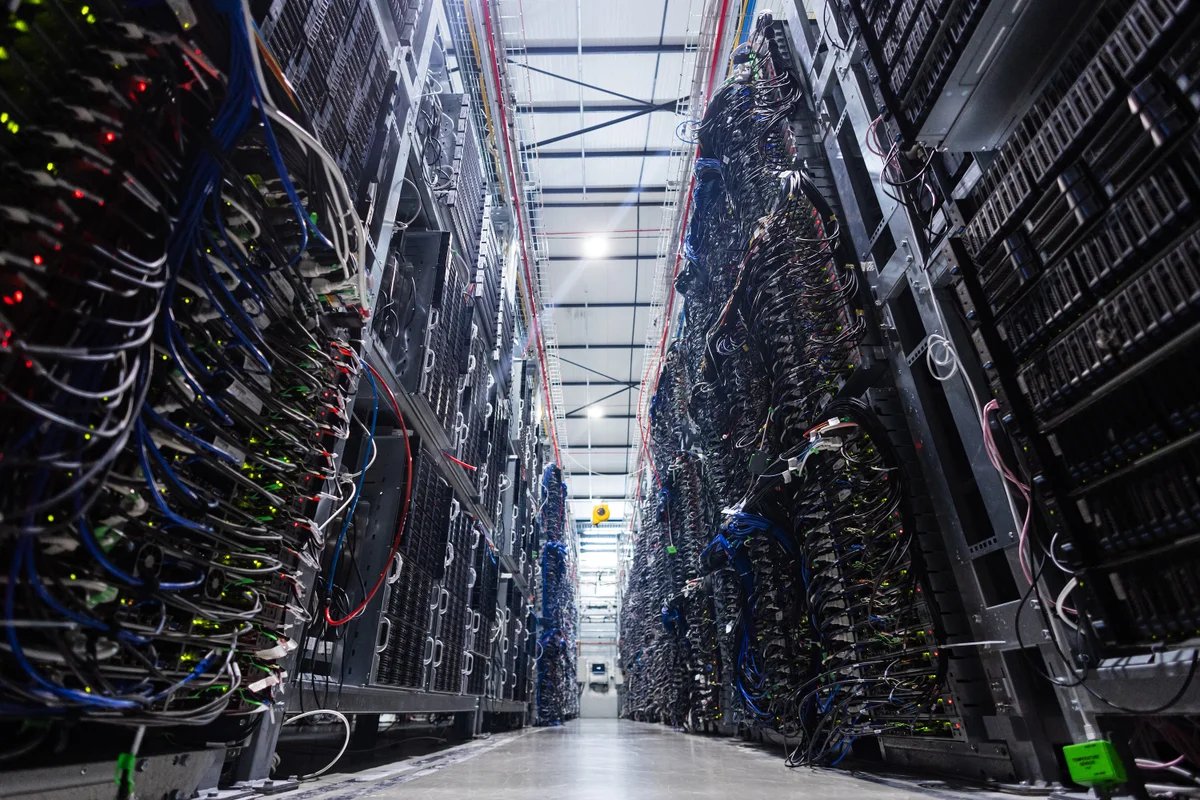

Compute is not new. Every chat with Claude or GPT runs on the same underlying machinery that calculates spreadsheet totals and renders video games—silicon wafers etched with billions of microscopic switches, organized into specialized processors. Training a frontier model can require tens of thousands of these processors running for weeks or months. Once the model is trained, using it also consumes compute each time someone asks a question. That demand now reaches across the supply chain. On January 15 Taiwan Semiconductor Manufacturing Company (TSMC), which fabricates most of the world’s advanced AI chips, announced it would spend up to $56 billion this year alone to expand capacity. Customers are still asking for more.

AI policy expert Lennart Heim is a useful guide to this machinery. He formerly led compute research at the RAND Center on AI, Security, and Technology and cofounded Epoch AI, which tracks the resources behind frontier AI models. His beat is where a cloud dashboard becomes a construction project—where digital demand collides with factories, transformers, chips and cables.

[An edited transcript of the telephone interview follows.]

Developers are saying the rate limits and blocked third-party tools look like a compute crunch. What does a compute shortage actually mean?

When we say “compute,” we mean computing power. For AI, training compute scales with model size: bigger neural networks need more data, and more data needs more processing power. What was underreported for years is that the same relationship holds for deployment. Running the model for users—inference—is incredibly compute-intensive because bigger models need more computing power to serve. So if more people use AI with more tokens and more intensity, you need more compute. If 10 times more people use AI 10 times more heavily, you need close to 100 times more compute.
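The arithmetic in that last sentence can be sketched in a few lines. This is a minimal illustration, assuming serving compute is simply proportional to the number of users times how heavily each one uses the model; the function name is hypothetical, and real systems gain some efficiency from batching, which is why Heim says "close to" 100 times.

```python
def relative_compute_demand(user_growth: float, usage_growth: float) -> float:
    """Serving compute relative to today.

    Assumption (for illustration only): demand is proportional to
    the number of users multiplied by the usage per user.
    """
    return user_growth * usage_growth


# 10 times more people, each using AI 10 times more heavily
print(relative_compute_demand(10, 10))  # 100
```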

Why does a flat-rate subscription break down for AI in a way it didn’t for earlier Internet services?

The Internet runs on flat-rate subscriptions: you pay $20 a month and get effectively unlimited use. That works when the marginal cost per user is low—a Google Workspace power user doesn’t cost Google much more than a light user. With AI, it breaks. Using AI 10 times more heavily costs the provider roughly 10 times more money. Paying per token means you literally pay for your resources; paying $20 flat means you’re often burning more compute than $20 can buy. That’s why we see rate limits mostly on monthly subscription plans. At some point, you have to rate limit.
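A rough sketch of why the flat fee breaks down is below. Only the $20 fee comes from the interview. The per-token serving cost is a hypothetical figure chosen for illustration, not a number from any provider, and the function names are likewise made up.

```python
FLAT_FEE_USD = 20.0                # monthly subscription price quoted above
COST_PER_MILLION_TOKENS_USD = 5.0  # hypothetical provider cost, illustration only


def serving_cost(tokens_millions: float) -> float:
    """Provider's cost to serve a user, assumed linear in tokens used."""
    return tokens_millions * COST_PER_MILLION_TOKENS_USD


def flat_rate_margin(tokens_millions: float) -> float:
    """What the provider keeps (or loses) on one flat-rate subscriber."""
    return FLAT_FEE_USD - serving_cost(tokens_millions)


def break_even_tokens_millions() -> float:
    """Monthly usage at which the flat fee exactly covers the serving cost."""
    return FLAT_FEE_USD / COST_PER_MILLION_TOKENS_USD


light_user = flat_rate_margin(2.0)   # 20 - 10  =  10.0 (profitable)
heavy_user = flat_rate_margin(20.0)  # 20 - 100 = -80.0 (10x the usage, 10x the cost)
```

Under these assumed numbers, a subscriber becomes unprofitable beyond 4 million tokens a month, which is the point where a provider either caps usage with a rate limit or charges per token.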

Beyond rate limits, what levers do these companies have to control how much compute users consume?

They have multiple levers. If you use ChatGPT, it defaults you to a mode called Auto: you ask a question, and ChatGPT figures out which model should answer. Does the question need a really smart model that thinks for a long time, or are you just asking about the weather, in which case it can give you an immediate answer? Anthropic started defaulting to Claude Sonnet, which is a smaller, less powerful model. It works more cheaply, but you also get less intelligence out of it.

People also aren’t using these tools efficiently. It’s like asking Albert Einstein how to open a bottle of wine.

OpenAI’s Codex offers more usage for the money than Claude Code. Is that sustainable, or are we going to see everyone move toward more restrictive plans?

OpenAI has been the company with more money and a higher valuation, and they simply have more compute. Building a data center is hard; building chips is maybe the hardest thing in the world. Even if OpenAI stopped developing good models tomorrow, they would still have a ton of compute, and that gives them a ton of power.

Anthropic’s problem is that data centers are incredibly expensive—you have to pay so much to NVIDIA—and if you overbuild, you’ve spent huge sums on unused capacity. You want to build exactly as much as you need, but you can’t forecast it.

The future will continue to be somewhat compute-constrained, and eventually market mechanisms solve it: you raise the price. Right now I’d say that these companies prefer to rate limit, so everybody gets the experience, rather than raise prices.

Walk me through the supply chain. What are the biggest bottlenecks that prevent AI companies from simply building more compute?

Software companies have historically been able to scale 10 times or 100 times on short notice because they weren’t bound by physical constraints—that’s the Silicon Valley ethos. But if we had 100 times more AI users tomorrow, we just wouldn’t have enough compute to serve them.

That mindset runs straight into the supply chain. For instance, TSMC is a company where if they build a factory without a customer and it isn’t 80 percent utilized, they go bankrupt. Sam Altman shows up saying he needs 100 times more chips, and they say, “You’re crazy.” That’s partly why we have a compute shortage.

Same thing further down the chain: once you have the chips, you need power—you need gas turbines. You go to the gas turbine manufacturers and say, “We need N times more gas turbines,” and they say, “You’re kidding me—this industry has been flat for the last decade.” That’s where the digital world meets the physical world. Right now we don’t have enough memory. A lot of it will go to AI chips, which means memory prices rise, and your smartphone next year costs more. Companies want to build more memory and don’t have enough clean-room space. They need special factories, so-called “fabs”—but only a handful of companies in the world can build those fabs, and they’re all fully booked.

Are training models and answering user queries competing for the same resources?

Companies want to build bigger, more capable systems so they can raise more money and eventually build AGI—and at the same time, they want to make money right now. Inference spikes when everyone is awake and using it; training is continuous.

A better frame is probably not training versus inference but R&D compute versus serving compute: people need to test ideas. Recent reports suggested that the majority of compute, something like 60 percent, was R&D compute. That highlights how these companies are constantly trading off between building better products and allocating compute to users.